Goal Cascades Are Not About the Framework

Most teams do not have a goal-setting problem because they picked the wrong framework. They have a goal-setting problem because their work is not connected to feedback.

I hear a familiar argument whenever teams try to get more serious about planning. Should we use OKRs? Should we use OGSM?

And that conversation naturally leads into more granular questions. Are bets different from strategies? Where does the roadmap fit? What goes in our backlog?

Creating shared language is important because teams need some way to reason together. But language can also become a hiding place. The more energy a team spends debating labels, the easier it is to avoid the harder question: can we explain how today’s work moves us closer to the outcome we said we wanted?

That is the job of a goal cascade. A goal cascade is not a hierarchy of documents. It is a chain of assumptions.

At the top, you name the outcome you want. Underneath it, you name the path you believe will get you there. Closer to the work, you name the faster signals that should tell you whether the path is behaving the way you expected.

If the cascade is healthy, teams can move between those levels without losing the thread. A backlog item connects to a roadmap bet. A roadmap bet connects to a strategy. A strategy connects to an objective. Each layer has a reason to exist because each layer helps the team make a better decision.

If the cascade is unhealthy, the words still look right. The slide still has objectives, goals, strategies, measures, key results, initiatives, bets, and metrics. But the connection between them is mostly decorative. The team can recite the framework, but cannot use it to decide what to cut, what to protect, or what to learn next.

That is where goal systems usually break: not in the naming, but in the missing feedback loop.

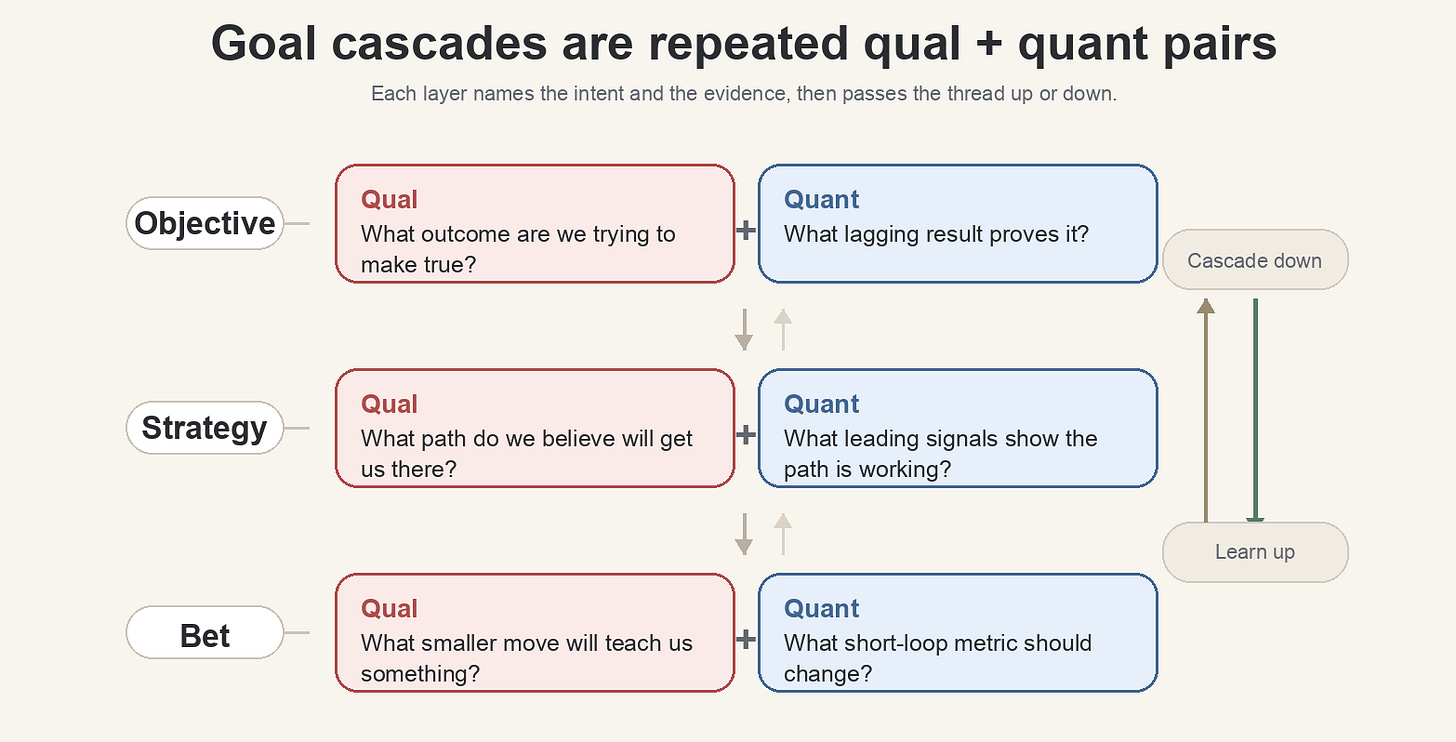

Illustration of qualitative and quantitative goal pairs cascading between objective, strategy, and bet levels

Start with the pair: intent and evidence

Behind any goal framework is a common principle: every useful goal needs two parts.

It needs a qualitative description: what are we trying to make true?

It also needs a quantitative description: how will we know whether it became true?

Different frameworks name this pair differently. In OGSM, the objective is the qualitative statement and the goal is the quantitative counterpart. In OKRs, the objective is the qualitative statement and the key results are the quantitative counterpart. The labels matter less than the pattern.

Qualitative without quantitative becomes aspiration. Quantitative without qualitative becomes metric theater. Together, they create a usable goal: a direction with a way to inspect progress.

Take a simple example.

“We want to create a cohesive, high quality customer experience” is a useful direction, but by itself it is not enough. Different people can hear that sentence and make different choices. A designer may think about consistency. An engineer may think about stability. A product manager may think about conversion. A support team may think about fewer complaints.

None of those interpretations is wrong, which is exactly the problem. The phrase needs a measuring partner.

A team might choose signals like pages loading under 2.5 seconds. Or the team might choose a broader composite signal, like Lighthouse scores above 90.

Those numbers are not magic. They are not the customer experience itself. But they give the team something inspectable, which means the conversation can move from opinion to evidence.

That is the first useful move in any cascade: pair the thing you want to make true with the evidence that would tell you whether it is becoming true.

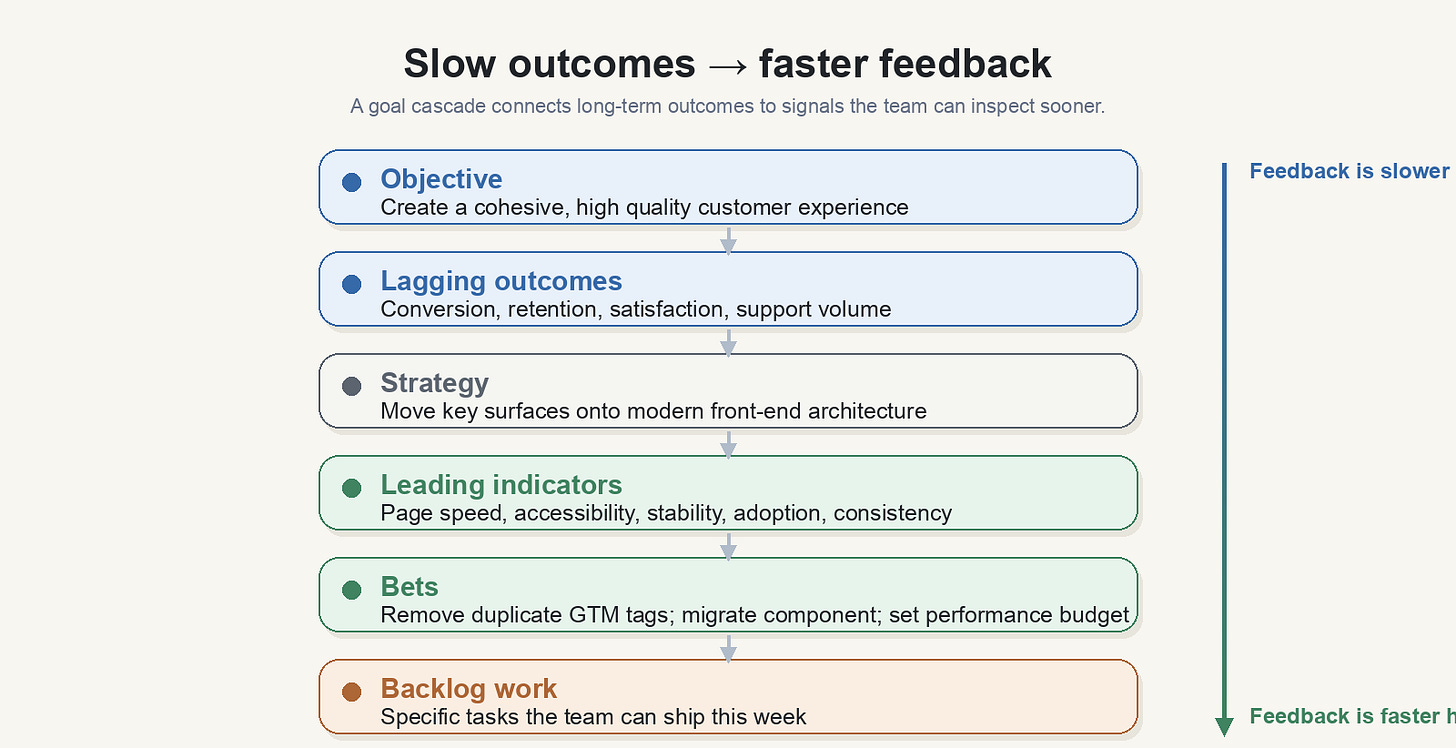

The cascade connects slow outcomes to faster feedback

The biggest difference between long-term goals and day-to-day work is feedback speed.

A long-term objective sets direction, but feedback is slow. “Create a better customer experience” may take years to fully prove. Revenue, retention, conversion, repeat visits, support volume, and brand trust all matter, but many of them move slowly or are hard to attribute cleanly.

Closer to the work, feedback gets faster. A team can measure whether a page got faster this week. It can measure whether responsiveness improved. It can measure whether duplicate tags were removed. It can measure whether a component migrated to a shared architecture. Those signals are not the final outcome, but they can tell the team whether the chosen path is behaving as expected. That is the purpose of leading indicators.

A lagging measure tells you whether you got there. A leading measure tells you whether you seem to be getting closer.

The road trip analogy is simple because it works. If the goal is to arrive in Washington in time for a wedding, the lagging measure is whether you arrive on time. The leading measures are the things you can inspect along the way: miles traveled, time elapsed, fuel remaining, traffic, detours.

No one mistakes miles traveled for the wedding. But they are important signals as you make progress.

Work is the same. A team may not be able to prove revenue impact until more of the funnel is on a new platform. That does not mean the team should fly blind. It means the team should measure the inputs it believes will eventually matter: page speed, stability, accessibility, adoption, reliability, or whatever else the strategy depends on.

A good cascade makes that belief visible. It says, “We believe this faster signal is connected to that slower outcome.” The belief may be wrong, but now it can be inspected.

Strategy is the path, not the wish

A strategy should explain how the team believes it will achieve the objective.

If the objective is a cohesive, high quality customer experience, one strategy might be to create responsive digital experiences on a stable modern front-end architecture. More specifically, a team might decide to move product surfaces onto a modern framework because shared architecture should make performance, consistency, and maintainability easier to improve.

That is a strategy because it chooses a path.

It also implies tradeoffs. If the path is monorepo adoption, then some work deserves protection even when it does not immediately look like feature output. Migration work, tooling, standards, documentation, and developer experience are not side quests. They are part of the operating theory.

This is where teams often lose nerve. The objective sounds customer-facing, but the strategy may require platform work. The lagging outcome may be conversion or customer satisfaction, while the leading work may be build pipelines, app migration, performance budgets, and fewer duplicate tags.

Without a cascade, that work is easy to dismiss as internal plumbing.

With a cascade, the team can explain the mechanism: better architecture should improve speed and consistency; speed and consistency should improve the customer experience; improved customer experience should support the business outcome.

The chain may be wrong. But at least now the team is debating the right thing. Not whether platform work “counts,” but whether this platform work is the right path to the outcome.

Bets are how strategy learns

Teams often get stuck trying to distinguish strategies from bets. The distinction is mostly time horizon.

A strategy is a longer-running path. “Move teams onto the monorepo” may take a year. “Create a faster, more stable customer experience” may take longer. These are large directional choices, and they need sustained attention.

A bet is a smaller move inside that path. It is a learning move. It says: if we make this specific change, we expect this specific signal to move.

For example: if we remove duplicate Google Tag Manager tags from a page, we expect site speed to improve by 500 milliseconds.

That is a bet. It is useful because the feedback loop is short. The team does not have to wait a year to learn something. It can run the change, inspect the signal, and decide what to do next.

This does not make bets separate from strategy. Bets are how strategy learns.

A bet moves the roadmap. The roadmap moves the strategy. The strategy moves the objective. Or at least that is the theory.

The cascade keeps the theory honest by making each assumption visible enough to challenge. If the tag cleanup does not improve speed, the team has learned something about the mechanism. If speed improves but conversion does not, the team has learned something else. Either way, the work produced information instead of just activity.

Roadmaps and backlogs translate the cascade into work

One common mistake is trying to stuff everything into the framework.

The OGSM should not contain the entire roadmap. The OKR should not become a backlog. A goal-setting system is not a project inventory.

The goal system should answer:

What outcome are we trying to create?

How will we know?

What path do we believe will get us there?

What signals will tell us whether that path is working?

The roadmap answers a different question:

What sequence of work do we believe will make progress against that path?

The backlog is even closer to the ground:

What specific work needs to happen next?

These layers should inform each other, but they should not collapse into one another. When they collapse, teams lose the ability to reason at the right level.

A backlog item is too small to carry the whole strategy. A strategy is too broad to tell an engineer exactly what to build next. A roadmap translates between them.

That translation is where a lot of planning work either becomes useful or becomes theater.

If the roadmap is connected to the cascade, a team can explain why migration work belongs next to customer-facing work. It can explain why a performance budget matters. It can explain why removing duplicate tags, improving responsiveness, or standardizing components is not just cleanup. Those items are small because work has to be small enough to do, not because the outcome is small.

If the roadmap is disconnected from the cascade, the backlog becomes a list of things people hope are important.

Use any framework, but keep the operating principle

This is why the framework matters less than the operating principle underneath it.

You can use OKRs. You can use OGSM. You can use bets.

You can use a roadmap with outcomes and measures. You can use your own language if the team understands it.

But the principle should stay the same:

Pair qualitative intent with quantitative evidence.

Connect slow outcomes to faster feedback.

Make the strategy explicit enough to expose tradeoffs.

Use bets to learn before the lagging outcome arrives.

Keep the roadmap and backlog connected to the outcome, without confusing them for the outcome.

The point is not to make planning look clean. The point is to make decisions easier when reality gets messy. Because reality is messy. Dependencies slip, metrics don’t move like we expect, customers don’t stay in the happy path.

A good cascade does not prevent that. It gives the team a way to respond without losing the plot. If the objective is clear, the team can adjust the path. If the strategy is explicit, the team can inspect whether it is still working. If the measures are leading indicators instead of vanity metrics, the team can learn before it is too late. If the backlog is connected to the roadmap, the team can explain why today’s work matters.

The test is: when the plan starts to slip how well can the team still explain what it is learning and what it will change next.